Artificial intelligence has been on everyone’s lips for some time now. Press, radio, and television, as well as the Internet, have been reporting on “Deep Learning” and “Deep Neural Networks” since Google’s AlphaGo mastered Go, the most complex board game known to us, and clearly defeated one of the strongest Go players in a five-game match. The techniques used, combined with a lot of fast hardware, can be applied to a host of other problem areas.

Deep neural networks are revolutionizing research, industrial applications, and business opportunities in the areas of AI and machine learning. Deep learning enables machines to outperform people in areas traditionally considered difficult for computers, such as image recognition (for example, facial recognition or cancer cell recognition in X-ray and CT images).

Deep neural networks are trained on large amounts of relevant data representing the inputs and outputs of a system. However, a deep neural network behaves like a black box whose contents remain hidden, so the transparency of classic modular system architectures is lost.

The DEEPLEE research project aims to change this. It builds on the DFKI’s expertise in deep learning and language technology and develops it further in the following areas:

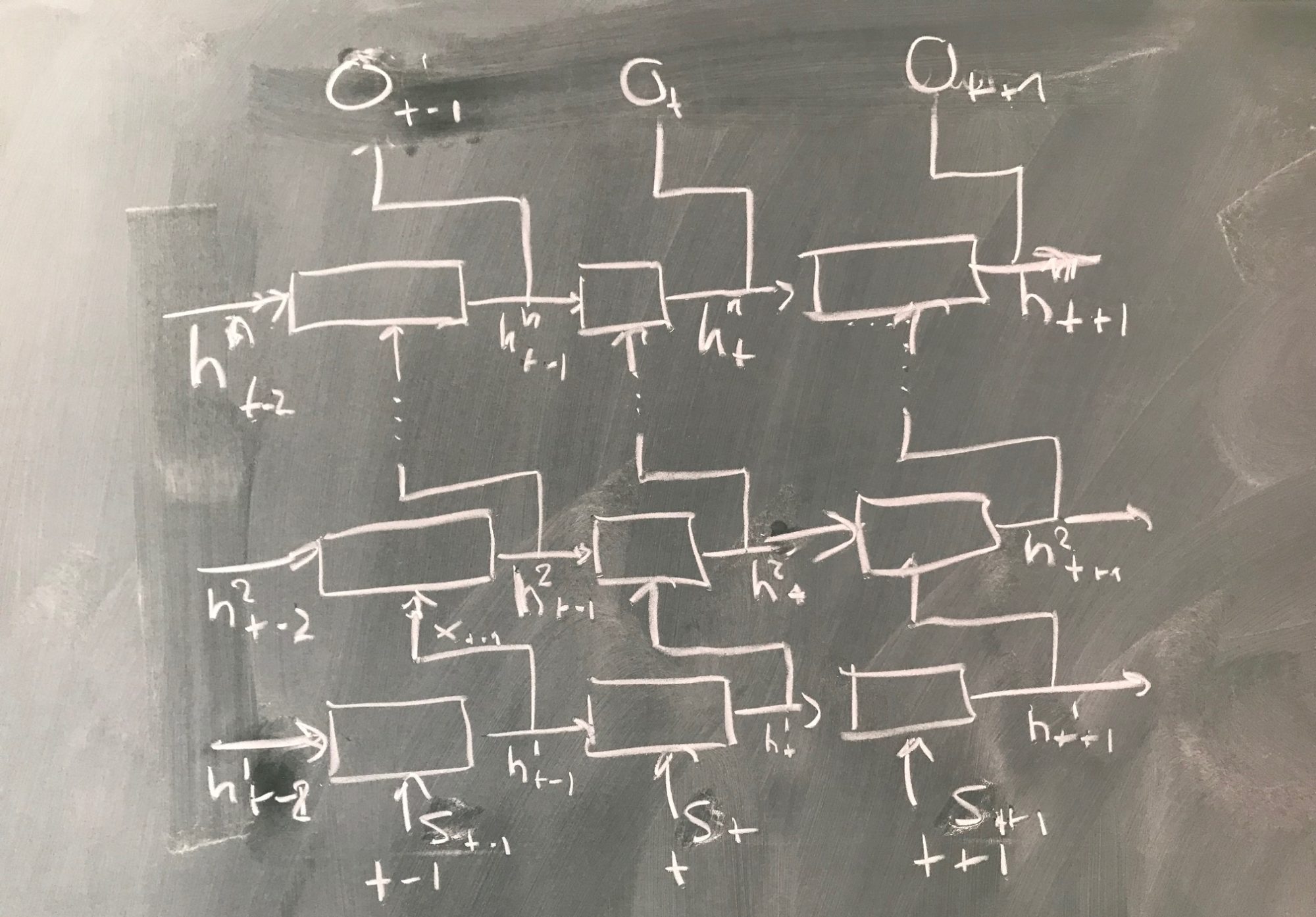

- Modularity in architectures of deep neural networks

- Use of external knowledge

- Deep neural networks with explanation functionality

- Machine teaching strategies for deep neural networks

The result of the research work will be a modular framework based on deep neural networks that enables end-to-end applications for three language technologies: information extraction, question answering, and machine translation. In an end-to-end system, a single (very large) network is trained for the entire application, instead of separate neural networks being trained for individual subcomponents and then fitted into a traditional system architecture.

---

The DEEPLEE project was funded by the German Federal Ministry of Education and Research (Bundesministerium für Bildung und Forschung, BMBF) from 1 October 2017 to 30 September 2020 under grant number 01IW17001.